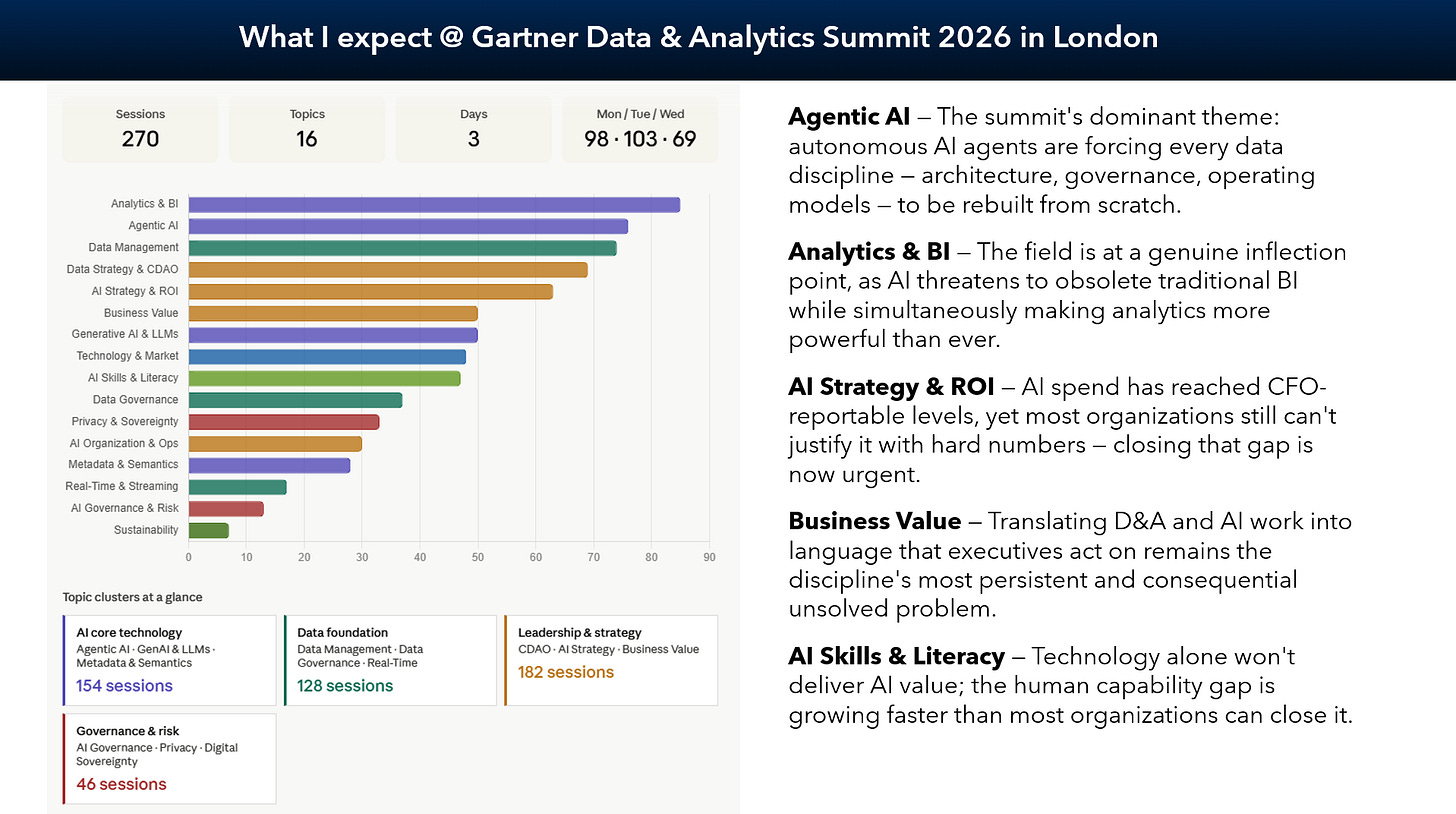

Gartner Data & Analytics Summit 2026 - The Definitive Recap for AI

Strategic insights from the conference with a focus on the impact of AI on organizations

I spent this week at the Gartner Summit, gathering incredible insights on Data & AI from presentations and from talks with visitors, vendors and my own customers. Before traveling to London, UK, I had formulated some insights of my own, which I want to extend here with what I gathered at the conference.

Data Products are established now, although a clear definition of what the term means is a different matter. What matters to me in a product is business value, clear ownership, and managing it along a lifecycle, not as a project.

Data Contracts are increasingly relevant. They provide semantics for AI, federate governance through quality rules, define business ownership, and support interoperability to multiply the value of the data.

No booth, no presentation, no talk without AI. AI was everywhere, and while many organizations still lack the right data maturity, business cases and AI-ready data, it is becoming increasingly transformative for organizations and for the technology itself.

The human role in AI is critical, but at the same time it is increasingly undefined. Data and business roles are affected and have to evolve. A huge opportunity lies in the collaboration between humans and AI.

There is no single way, no single strategy and no single kind of data & AI organization. Maturity matters a lot. What is right for you today can be different in 2-3 years. What you need depends a lot on how you are able to create value. Don’t look for a standard; understand your value.

Context is king when it comes to making AI (generative/agentic) work. But it also became clear that no one can really define what context means. Many vendors showed different approaches, but the concept is young and evolving fast. Is it a data catalog, RAG, a semantic layer, or just a prompt or text file?

Data Governance is more important than ever. But please make it automated, federated and adaptive. It has to be the enabler for creating value.

Strategy & Operating Model are more important than ever, but they are changing as AI accelerates operations, innovation and requirements, and drives organizations to build the necessary capabilities faster than ever.

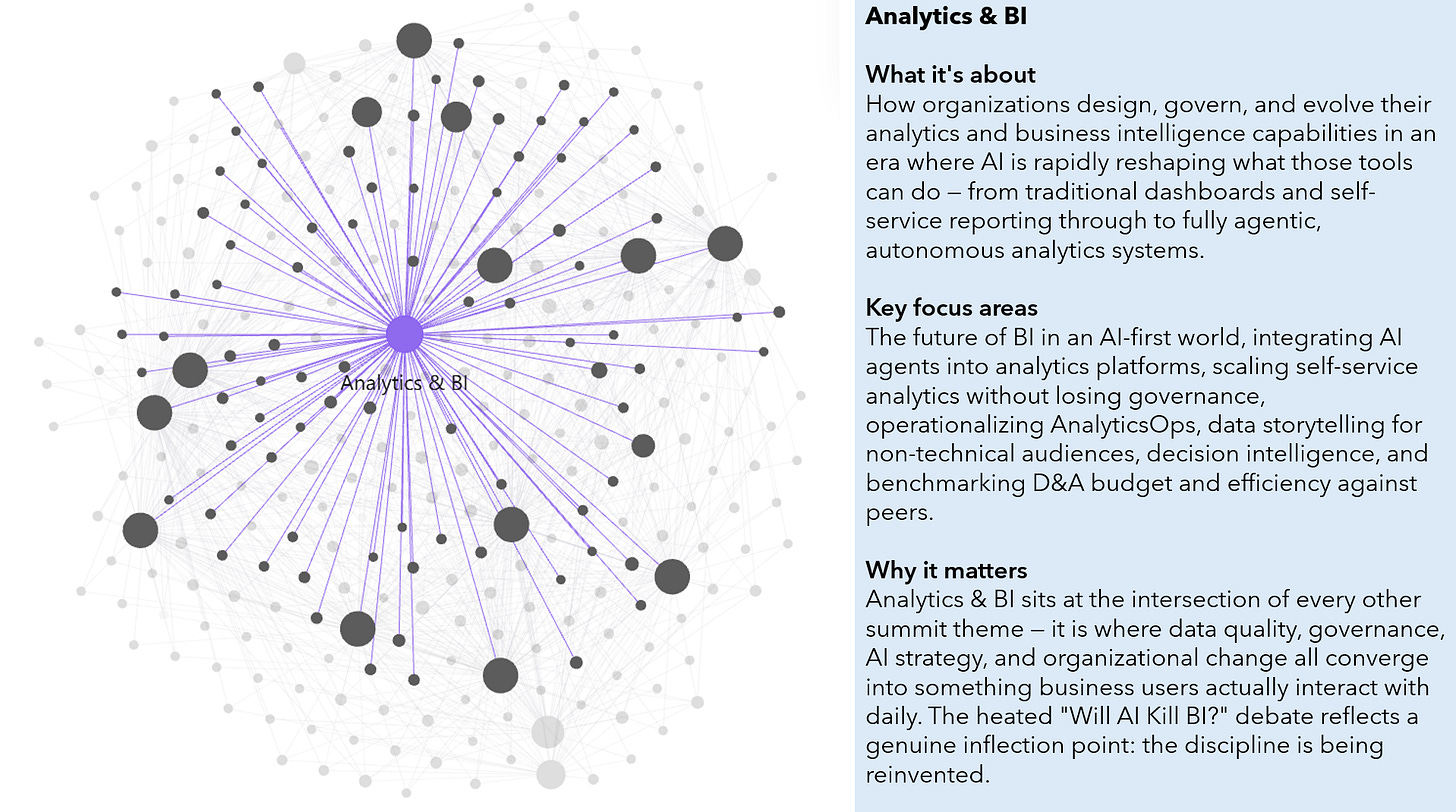

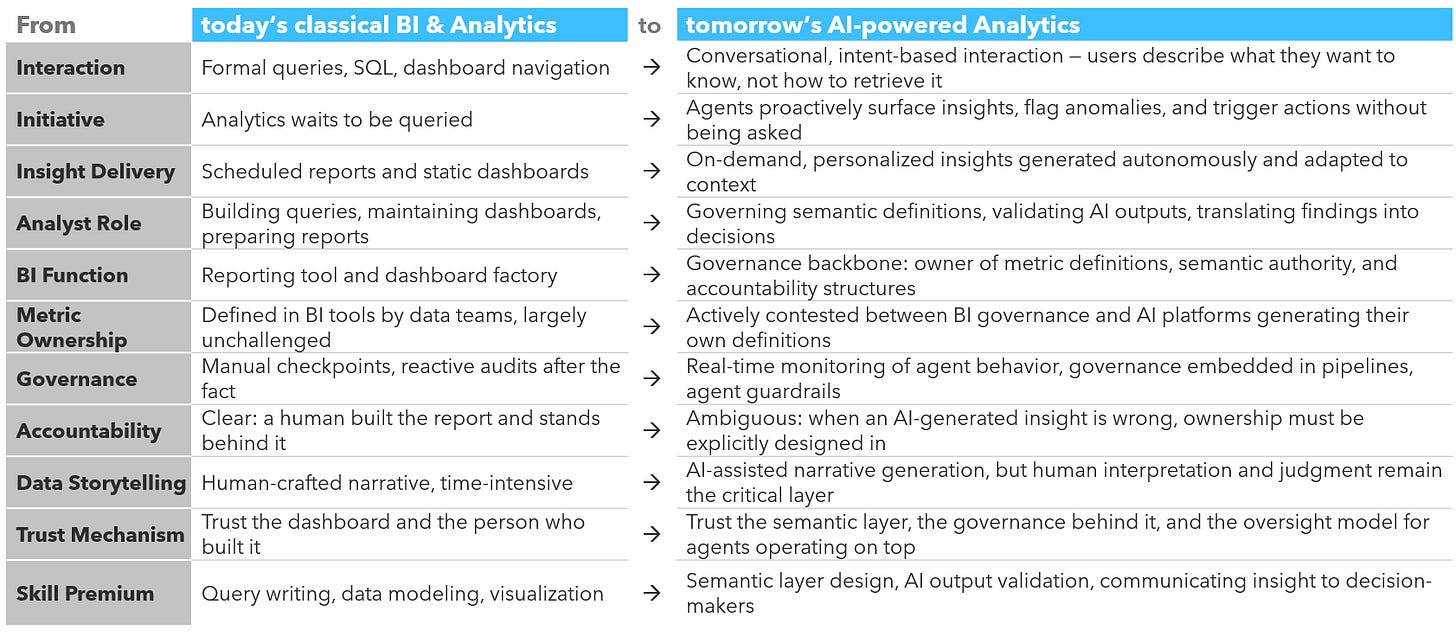

Insights on Analytics & BI

Analytics and BI are in a genuine architectural shift, not a tooling refresh. The governance disciplines BI developed over time (authoritative definitions, data lineage, accountability for published numbers) are becoming more important as AI takes on more autonomy, not less. Most organizations are adopting AI-powered analytics capabilities faster than they are building the infrastructure to make those capabilities trustworthy.

The real contest in analytics is not between dashboards and AI interfaces. It is about who gets to define what “revenue” means when a machine answers the question, and the metric layer that standardizes those definitions across an organization is becoming the most strategically important infrastructure that most people have never heard of.
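To make the metric-layer idea a bit more tangible, here is a minimal sketch in Python. It is not any vendor's product or API; the class names (`Metric`, `MetricLayer`), the field names (`gross_amount`, `refunds`) and the definition of revenue itself are assumptions chosen only for illustration. The point is that "revenue" is defined once, with an owner, and every consumer, whether a dashboard or an AI interface, evaluates that same definition.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, Mapping

Row = Mapping[str, float]  # hypothetical record shape: column name -> numeric value


@dataclass(frozen=True)
class Metric:
    name: str
    owner: str                                  # accountable business owner
    description: str                            # agreed definition in plain language
    compute: Callable[[Iterable[Row]], float]   # the single executable definition


class MetricLayer:
    """Tiny in-memory registry: one authoritative definition per metric name."""

    def __init__(self) -> None:
        self._metrics: Dict[str, Metric] = {}

    def register(self, metric: Metric) -> None:
        # Refuse silent redefinition: a second "revenue" is a governance issue.
        if metric.name in self._metrics:
            raise ValueError(f"metric {metric.name!r} is already defined")
        self._metrics[metric.name] = metric

    def evaluate(self, name: str, rows: Iterable[Row]) -> float:
        return self._metrics[name].compute(rows)


def net_revenue(rows: Iterable[Row]) -> float:
    # Assumed definition for this sketch: gross amount minus refunds.
    return sum(r["gross_amount"] - r["refunds"] for r in rows)


layer = MetricLayer()
layer.register(Metric("revenue", "Finance", "Gross amount minus refunds", net_revenue))

# Made-up sample rows, only to show the call.
orders = [
    {"gross_amount": 100.0, "refunds": 10.0},
    {"gross_amount": 50.0, "refunds": 0.0},
]
print(layer.evaluate("revenue", orders))  # prints 140.0
```

A real metric layer would compile such definitions to SQL and version them, but the design choice is the same: consumers ask for a metric by name and never re-implement it.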

Vibe Analytics, Gartner’s term for the intuitive, conversational way people interact with data without writing formal queries, is making data accessible to far more people. But accessibility and accountability are different problems, and organizations that adopt AI-powered insight generation faster than they build oversight mechanisms are simply producing wrong conclusions at higher speed.

When insight generation becomes cheap and fast, the scarce resource becomes interpretation: understanding what a finding means for a specific decision, for a specific audience. Teams that invest in this skill consistently outperform those that treat it as optional, and AI tools do not change that calculus; they raise the stakes for it.

AI will not kill BI as a discipline, as 71% of the participants in one session confirmed, but it is already replacing it as an interface. What is not going away is the need for someone to define the metrics, govern the definitions, and stand behind the numbers when a decision goes wrong. Organizations that recognize this are repositioning their BI function as the governance backbone of AI-powered analytics; those that treat BI purely as a reporting tool are likely to find that AI makes its absence very visible.

Insights on Business Value

Data and AI investment is running well ahead of value realization in most organizations. The gap isn’t primarily technical: frameworks, measurement methods, and delivery standards that connect data work to business results already exist and were well-represented at the Summit. What is missing is the organizational discipline to apply them consistently, to measure value before and after, to package data as reusable products, and to communicate results in terms that business stakeholders actually act on.

Most data and AI teams cannot explain what their work actually changed for the business, not because the value isn’t real, but because the tools to trace it from a technical output all the way to a business result are rarely in place.

AI initiatives have a tendency to generate time savings and efficiency gains that look good in progress reports but never reach the P&L, because time saved is not the same as money saved. The distinction between productivity gains that stay invisible on a balance sheet and AI investments that directly move revenue or reduce costs is one of the most important sorting mechanisms in AI portfolio decisions, and most organizations haven’t built it yet.

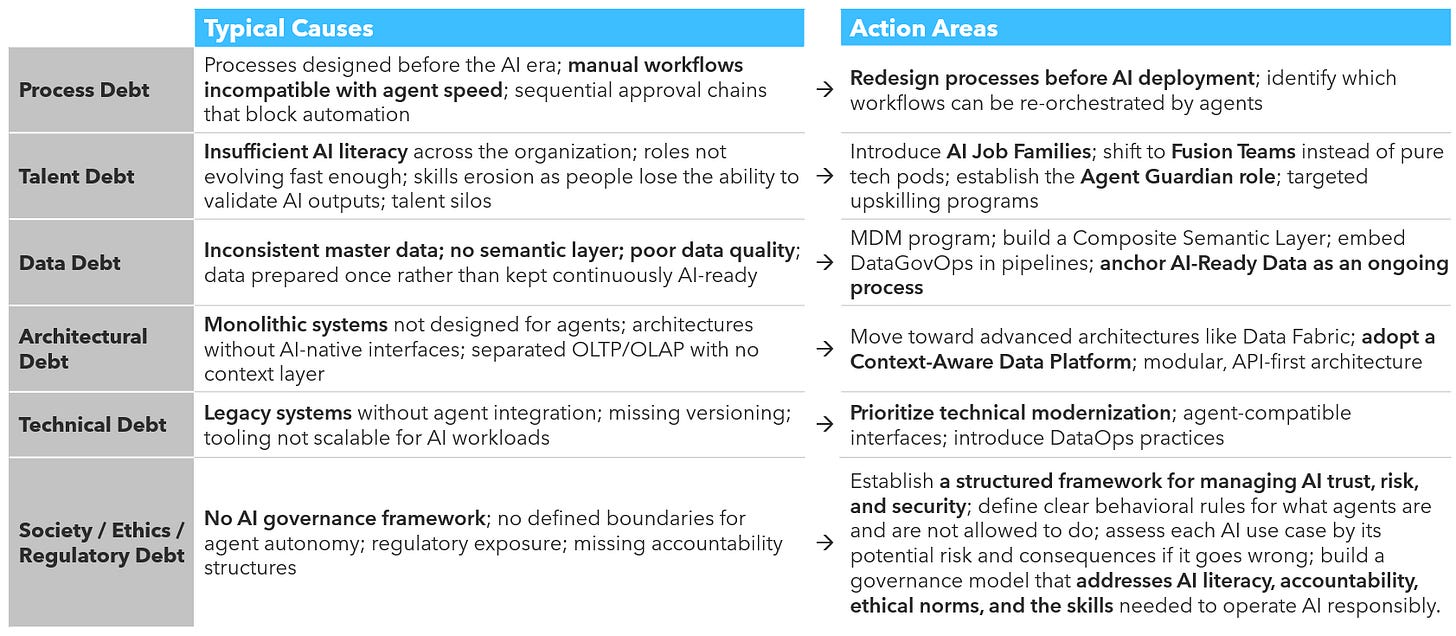

The most underappreciated barrier to AI value is not a missing technology but what Gartner calls organizational debt: accumulated gaps in processes, talent, and governance structures that were never designed for AI-scale operations. Building technical capability while leaving this organizational infrastructure unreformed is why so many AI investments stall between a successful pilot and a measurable outcome.

The next important step is to close the loop between what data and AI teams produce and what the business can verify as a result. That means treating data as a managed product with traceable value, choosing metrics that connect to business outcomes rather than just measuring activity, and building the stakeholder communication habits that turn measured value into continued investment. The organizations that get this right first will have a structural advantage, not because their AI is more sophisticated, but because they have made the value visible.

Insights on Agentic AI

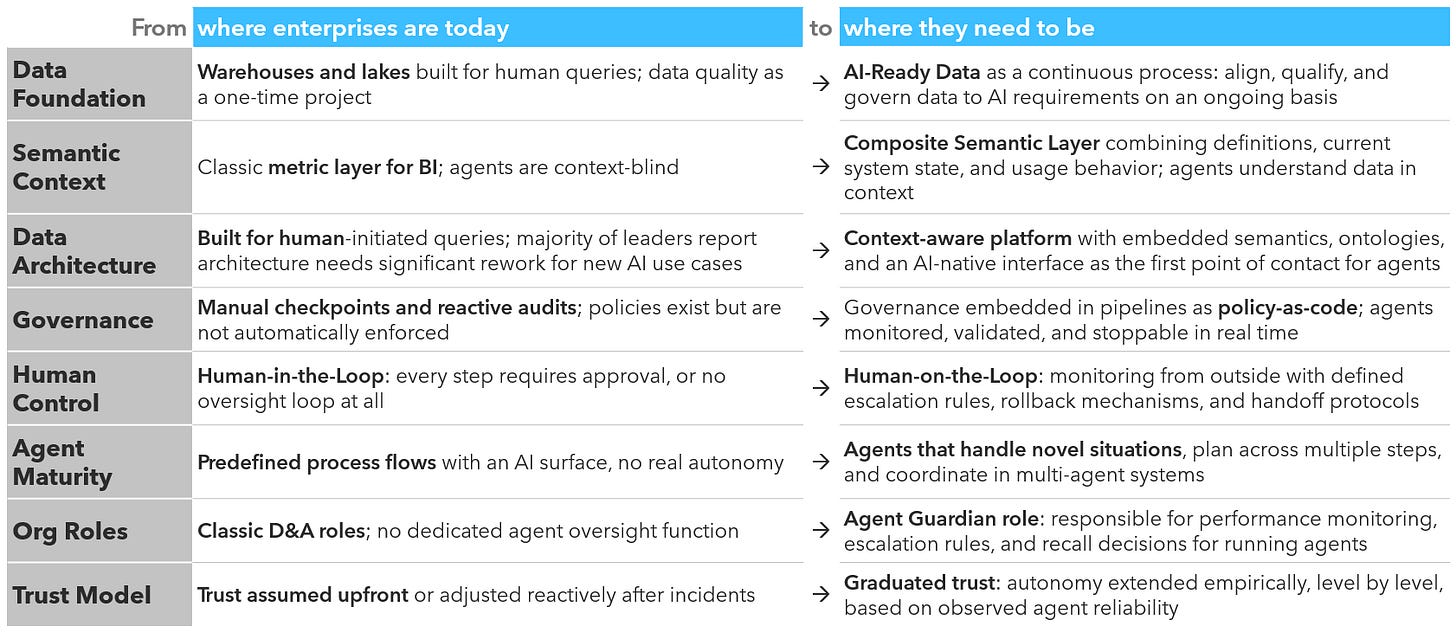

Agentic AI is moving from experimentation into early production, but the foundational infrastructure that makes agent behavior reliable and accountable, including semantic data layers, quality controls, and real-time oversight mechanisms, is being built in parallel rather than in advance. That sequencing creates real operational risk, and the organizations taking it seriously are treating governance and data readiness as first priorities, not follow-on tasks.

Most enterprises are trying to deploy autonomous AI agents on top of data infrastructure that was never designed to support them: the vast majority of D&A leaders report their architecture needs significant rework before new AI use cases can reliably run. The technical components for agentic AI exist; the foundation underneath them in most organizations does not.

The governance discipline that makes autonomous AI safe (monitoring agent behavior in real time, defining when humans re-enter the loop, setting clear boundaries on what agents can decide independently) is still in its early stages at most companies. Only a small fraction of organizations currently feel confident about their AI security and governance, which means the agents being deployed today are largely operating without the oversight infrastructure they require.

Most enterprise AI agents today are closer to sophisticated automations than to genuinely autonomous decision-makers: they follow predefined flows rather than reasoning through novel situations. The gap between what the market calls an “agent” and what can reliably handle complex, multi-step enterprise decisions without human oversight is still considerable.

The technology is ready to deploy, but most enterprises are not yet ready to receive it safely. Agentic AI in production requires data foundations, governance mechanisms, and organizational roles that most organizations have not yet built. The realistic path forward is starting with a narrow, well-governed use case while deliberately constructing the infrastructure that makes broader deployment trustworthy.

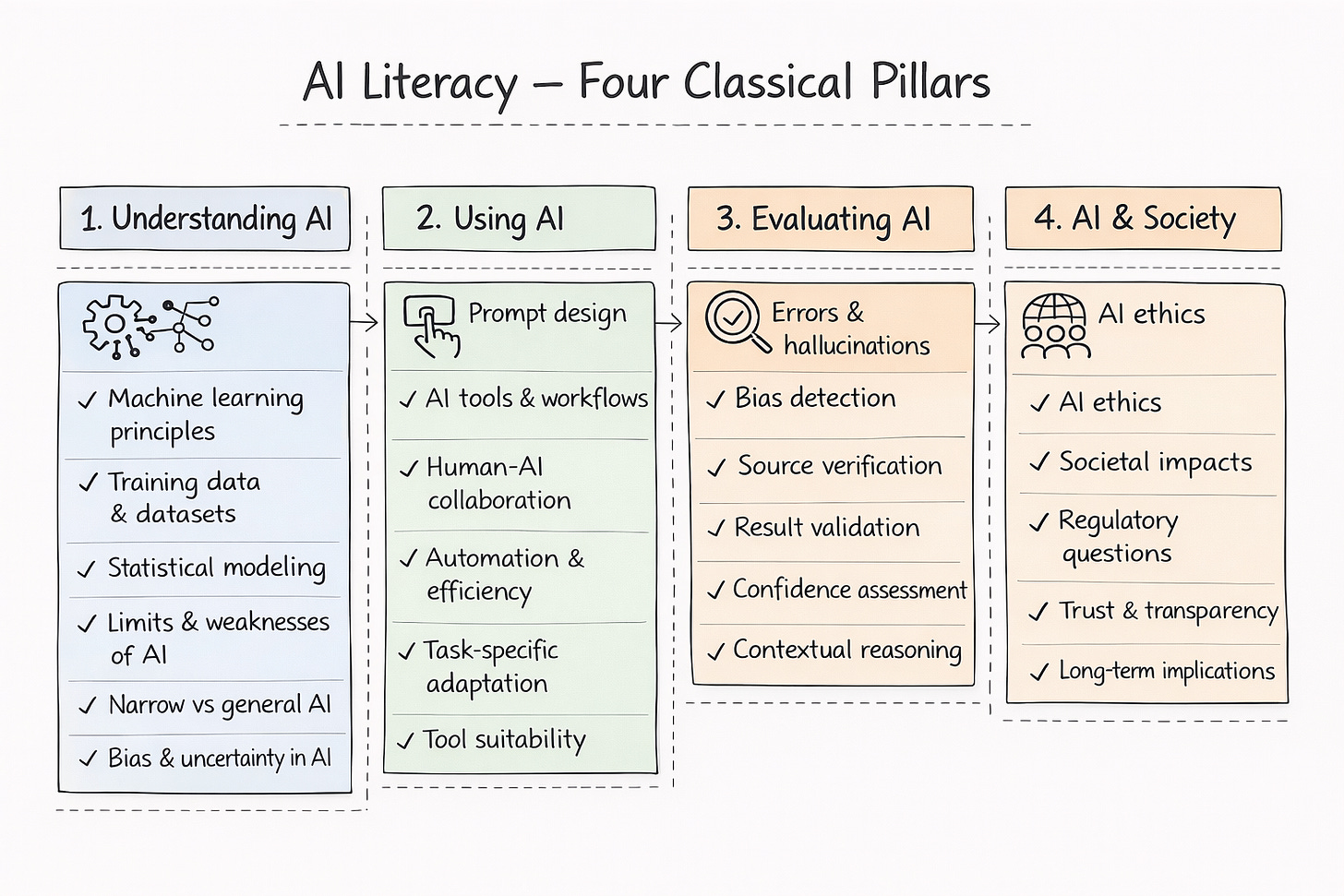

Insights on AI Skills & Literacy

Human capabilities for AI are being built mostly at the wrong level: tool literacy is advancing while the deeper organizational shifts - new team structures, new roles, new oversight models - are not keeping pace. The result is a workforce that can use AI but is not yet organized to govern it, which is the more consequential gap as agentic systems take on more autonomous decisions.

Most AI capability programs focus on teaching people how to use AI tools, but the real need is the opposite: as AI handles more routine analysis, the scarce human skills are those AI cannot replicate - asking the right questions, making ethical judgments, and synthesizing across domains. Training for tool proficiency while neglecting those capacities is a structural mistake that compounds over time.

The shift to AI is not just a skills problem; it is an organizational design problem. When work is distributed across human employees, AI agents, and rule-based automation, traditional headcount models stop describing reality, and teams built around technical specialists alone leave out the domain experts, validators, and ethical judgment-holders that make AI work reliably at scale.

The default mode in most organizations is either to approve every AI step manually or to hand over control entirely, but neither works as systems become more autonomous. What is needed is a deliberate, decision-by-decision choice about how much autonomy each use case warrants, combined with a new oversight role that monitors agent behavior from the outside and defines escalation rules rather than intervening in every step.
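A decision-by-decision autonomy choice with escalation rules could look roughly like the following Python sketch. Everything in it is an assumption for illustration: the use cases, the thresholds, and the names `DecisionPolicy`, `POLICIES` and `needs_human` are hypothetical and do not come from the Summit or from any framework.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class DecisionPolicy:
    max_amount: float      # hypothetical: above this value the agent must escalate
    min_confidence: float  # hypothetical: below this confidence the agent must escalate


# One policy per use case; the numbers are made up for the example.
POLICIES: Dict[str, DecisionPolicy] = {
    # The agent may decide small, high-confidence refunds on its own.
    "refund": DecisionPolicy(max_amount=200.0, min_confidence=0.9),
    # max_amount=0 means every contract change with a positive value goes to a human.
    "contract_change": DecisionPolicy(max_amount=0.0, min_confidence=1.0),
}


def needs_human(use_case: str, amount: float, confidence: float) -> bool:
    """Return True when the escalation rules hand the decision back to a person."""
    policy = POLICIES.get(use_case)
    if policy is None:
        return True  # no policy defined yet: default to human oversight
    return amount > policy.max_amount or confidence < policy.min_confidence


print(needs_human("refund", 50.0, 0.95))           # False: within both limits
print(needs_human("refund", 500.0, 0.95))          # True: amount above the limit
print(needs_human("contract_change", 10.0, 1.0))   # True: never autonomous here
```

The oversight role then owns the `POLICIES` table and reviews escalations, rather than approving each individual step.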

Organizations build the necessary human capabilities by treating the effort as three parallel workstreams rather than one training initiative. The first is reorienting skill development toward judgment, synthesis, and ethical reasoning - the capacities AI amplifies but cannot replace. The second is redesigning team structures to deliberately combine business, technical, and AI expertise, and the third is creating formal oversight roles and decision frameworks that define where humans supervise rather than execute.

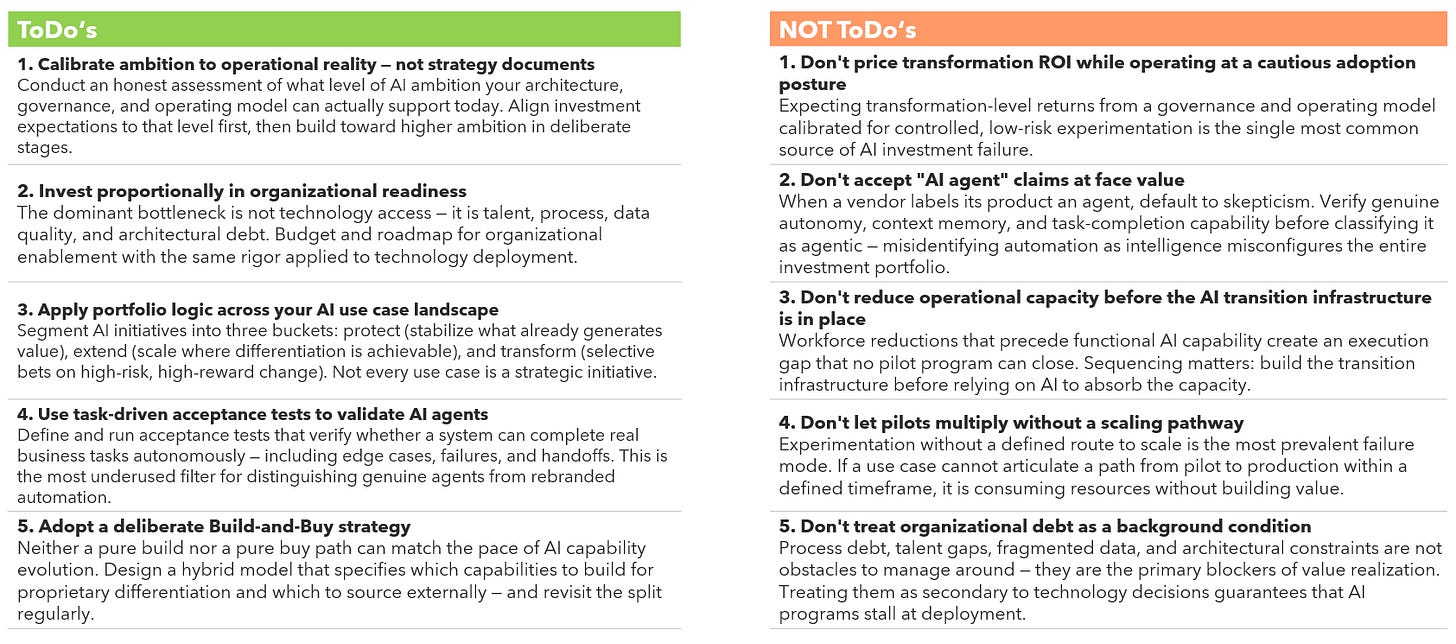

Insights on AI Strategy & ROI

Most organizations are running AI programs configured for the wrong level of ambition: their architecture, governance, and operating model are still calibrated for cautious, controlled adoption, while their investment expectations are priced for full transformation. That structural mismatch is where ROI consistently disappears.

The workforce reductions of recent years happened, at scale, before AI was capable enough to absorb that capacity. Organizations are now expected to deliver more with less before the transition infrastructure is even built, making the move from AI deployment to operational reality the most underfunded and most consequential step in the entire AI journey.

When a vendor calls its product an “AI agent,” the default assumption should be skepticism: the market is saturated with rebranded automation that lacks genuine autonomy, context memory, or learning, and misidentifying automation as intelligence is precisely how AI investment portfolios get misconfigured at scale. Task-driven acceptance tests, which verify whether an agent can complete real business tasks autonomously, are the most underused tool available for cutting through that noise.
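As a rough illustration of a task-driven acceptance test, the Python sketch below runs a candidate "agent" against a small set of business tasks and reports the share it completes without help. The agent interface (a function from task text to answer text), the names `AcceptanceTask` and `pass_rate`, and the sample tasks are all assumptions made up for this example, not a standard method.

```python
from dataclasses import dataclass
from typing import Callable, List

Agent = Callable[[str], str]  # hypothetical interface: task text in, answer text out


@dataclass(frozen=True)
class AcceptanceTask:
    prompt: str
    accept: Callable[[str], bool]  # business-defined check on the agent's answer


def pass_rate(agent: Agent, tasks: List[AcceptanceTask]) -> float:
    """Share of tasks the agent completes on its own; a crash counts as a failure."""
    if not tasks:
        raise ValueError("at least one acceptance task is required")
    passed = 0
    for task in tasks:
        try:
            answer = agent(task.prompt)
        except Exception:
            continue  # the agent could not handle the task at all
        if task.accept(answer):
            passed += 1
    return passed / len(tasks)


def scripted_bot(prompt: str) -> str:
    # Stand-in for rebranded automation: it only knows its predefined flow.
    canned = {"What is the refund window?": "30 days"}
    return canned[prompt]  # raises KeyError for anything off-script


# Made-up tasks: one on-script question, one that needs reasoning beyond the script.
tasks = [
    AcceptanceTask("What is the refund window?", lambda a: "30 days" in a),
    AcceptanceTask(
        "A customer returns an item on day 45. What applies?",
        lambda a: "no refund" in a.lower(),
    ),
]

print(pass_rate(scripted_bot, tasks))  # prints 0.5
```

In practice the tasks would come from real business processes and the acceptance checks from the people who own those processes; the value of the test is that it measures completed work, not demo quality.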

Most AI programs today are structurally positioned for the wrong outcome: the largest group of organizations is experimenting without scaling, organizational debt across process, talent, data, and architecture is blocking value realization faster than pilots can generate it, and the Build vs. Buy question has long since resolved into “both,” since neither pure path can match the pace at which AI capabilities are evolving. The dominant bottleneck is no longer technology access. It is organizational readiness, and most programs are not investing in it proportionally.

Scaling AI strategically requires, first, an honest decision about which level of ambition the organization is genuinely prepared to build for, and then aligning architecture, governance, and operating model to that level rather than the level that lives in strategy documents. The organizations closing the gap share one discipline: a portfolio logic that protects what already works, extends where differentiation is achievable, and bets selectively on transformation rather than treating every use case as a strategic initiative.

I hope you enjoyed these strategic takeaways on AI and AI strategy from Gartner this year. The topic is huge and we are all learning. Share your thoughts and ideas on making AI work in your organization.

For more, see my earlier article with first impressions: Gartner Data & Analytics Summit 2026 - Insights

My experience is in public health, trying to connect client demand (requests signal problems they need our help with), complaints, and knowledge outputs to demonstrate system-level value. I am currently doing it manually in Excel as a proof of concept before proposing a model-driven architecture. The manual version already surfaces patterns that haven’t been formally connected before, which is both the argument for building it and the evidence that it works.

Have you ever explored enterprise reporting and mapping business value with models?